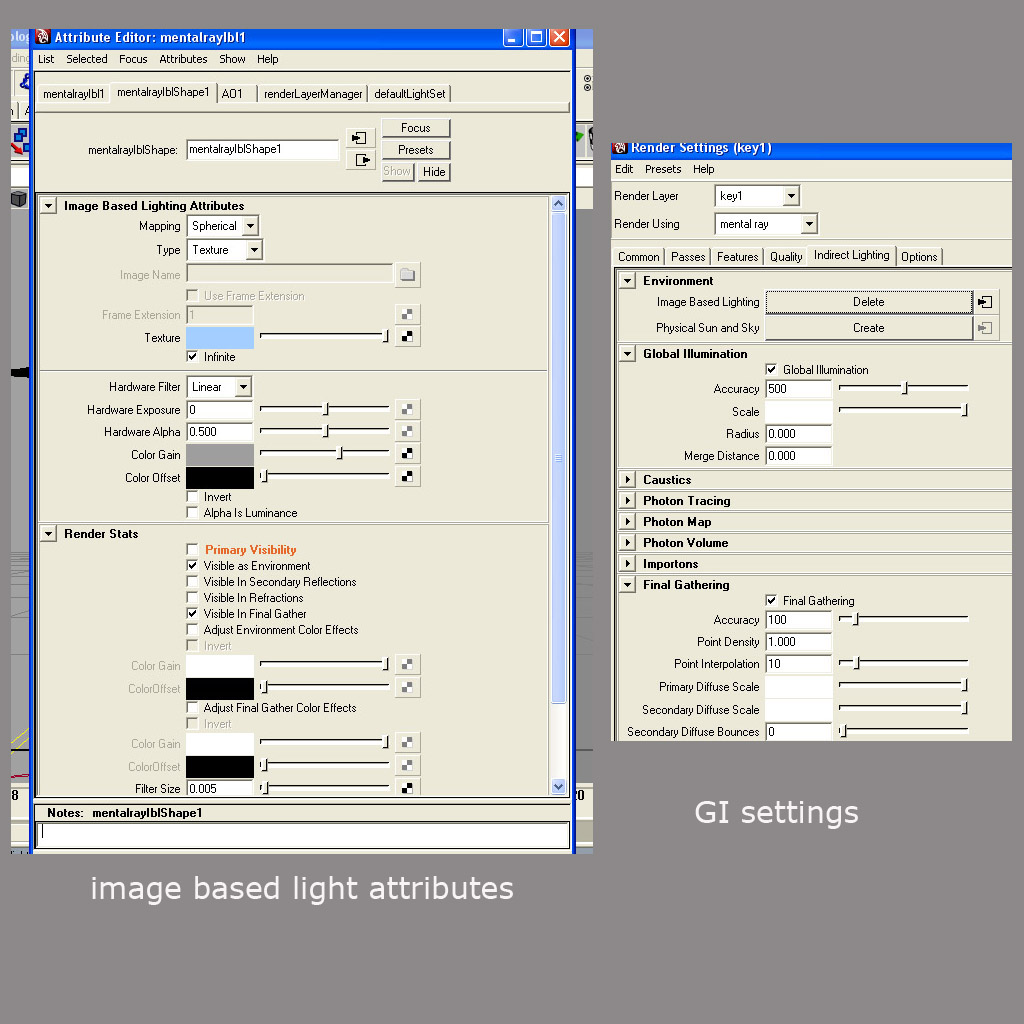

To attach the main render pass to a vtkRenderer, use void vtkRenderer::SetPass(vtkRenderPass *p). At run-time, if a pass is set on the renderer, the standard rendering code is bypassed and the render pass is used instead.

If you do not want to accumulate the diffuse and alpha values but only shoot a single ray for those, then that could still be handled by the same pipeline. You could, for example, set a flag inside your launch parameters which indicates what value you want to produce, and special-case that inside the ray generation program. E.g. instead of jittering the fragment sample position over the pixel area, you simply generate only one ray through the center of each pixel (offset (0.5, 0.5)). If the alpha should hold a binary decision of hit or miss, that could be handled in the same launch, and the accumulated radiance in many following launches. You could even reuse the same output buffer, but you would need to save its contents before running the other launches.

The diffuse and alpha values would be aliased when not accumulating them, since accumulation is not only shooting different paths through the scene to approximate the rendering equation for the global illumination, but is also antialiasing the image by jittering the primary ray sample over the pixel area. So if the alpha channel should express the coverage of fine detail or slanted edges of geometry, accumulating it would produce the more accurate results.

Writing such additional outputs is usually called Arbitrary Output Variables (AOV) and is supported by many renderers. This is also used for Light Path Expressions (LPE), where different surface interactions are stored into different channels, e.g. diffuse and specular events separately. An example writing the radiance, an optional albedo, and another optional normal vector for the primary hit to individual output buffers can be found in my intro_denoiser example.
So if the diffuse and alpha values should only be set for the primary ray, you could simply store them to the output buffers inside the ray generation program for that ray only.
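The launch-parameter flag mentioned above can be illustrated with a small CPU-side sketch in plain C++. This is not OptiX device code, and every name in it (LaunchMode, LaunchParams, Float2, samplePosition) is hypothetical and chosen only for illustration. It shows one way a ray generation routine might special-case the sample position: a fixed pixel-center offset of (0.5, 0.5) for a single-ray launch, or a random jitter over the pixel area for accumulating launches.

```cpp
#include <cassert>
#include <random>

// Hypothetical flag stored in the launch parameters: what this launch produces.
enum class LaunchMode { AccumulateRadiance, PrimaryOnly };

struct Float2 { float x, y; };

// Hypothetical stand-in for the launch parameters of one launch.
struct LaunchParams {
    LaunchMode mode;
    int width;
    int height;
};

// Returns the sample position for pixel (px, py) in pixel coordinates.
// PrimaryOnly:        one ray through the pixel center, offset (0.5, 0.5).
// AccumulateRadiance: offset jittered over the pixel area for antialiasing.
template <class Rng>
Float2 samplePosition(const LaunchParams& params, int px, int py, Rng& rng) {
    assert(px >= 0 && px < params.width && py >= 0 && py < params.height);
    Float2 offset{0.5f, 0.5f};
    if (params.mode == LaunchMode::AccumulateRadiance) {
        std::uniform_real_distribution<float> unit(0.0f, 1.0f);
        offset.x = unit(rng);
        offset.y = unit(rng);
    }
    return Float2{px + offset.x, py + offset.y};
}
```

With this layout, the host would only change the mode field between launches, and the same ray generation code would serve both the single-ray outputs and the accumulated radiance.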

To use Render Textures, first create a new Render Texture. Single Pass Stereo rendering renders both left and right eye images at the same time into one packed Render Texture (a special type of Texture that is created and updated at runtime).

MULTIPASS RENDERING PC

A render pass is a sequence of rendering commands that draw into a set of textures. This sample executes a pair of render passes to render a view's contents.

Single Pass Stereo rendering (Double-Wide rendering) is a feature for PC and PlayStation 4-based VR applications.

The path tracer implements a progressive Monte Carlo algorithm. If you mean to accumulate these three outputs in the same progressive way, there should be no problem handling the different outputs in a single pipeline and the same ray generation program, which would simply accumulate and write the generated data into the individual output buffers for the diffuse, radiance and alpha values. You're right, that would require storing the respective data in the per-ray payload inside your closest hit and miss programs, and care would need to be taken to only store the results for the interactions you're interested in.
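As a rough illustration of accumulating several outputs in the same progressive way, the sketch below keeps one buffer per output and updates each with a running average. The running-average formula is an assumption (one common way to accumulate progressively, not necessarily what the path tracer discussed here does), and the names OutputBuffers, Sample, accumulate and writeSample are hypothetical. It is plain CPU-side C++ rather than device code.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical per-output buffers, one float per pixel for simplicity.
struct OutputBuffers {
    std::vector<float> radiance;
    std::vector<float> diffuse;
    std::vector<float> alpha;
    explicit OutputBuffers(std::size_t pixelCount)
        : radiance(pixelCount, 0.0f), diffuse(pixelCount, 0.0f), alpha(pixelCount, 0.0f) {}
};

// Hypothetical result of tracing one path for one pixel.
struct Sample {
    float radiance;
    float diffuse;
    float alpha;
};

// Running average: after iteration i (0-based) the result is the mean of i + 1 samples.
inline float accumulate(float previous, float sample, int iteration) {
    assert(iteration >= 0);
    return previous + (sample - previous) / static_cast<float>(iteration + 1);
}

// Accumulates all three outputs of one sample into their individual buffers.
inline void writeSample(OutputBuffers& out, std::size_t pixel, const Sample& s, int iteration) {
    out.radiance[pixel] = accumulate(out.radiance[pixel], s.radiance, iteration);
    out.diffuse[pixel]  = accumulate(out.diffuse[pixel],  s.diffuse,  iteration);
    out.alpha[pixel]    = accumulate(out.alpha[pixel],    s.alpha,    iteration);
}
```

Because alpha is averaged like the other outputs, a pixel whose jittered samples hit geometry only half of the time converges toward an alpha of 0.5, which is the coverage behaviour described above.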